The AI Operating Model: How to Scale, Govern, and Win in the AI-First Era

Early wins with AI, like a sharp pilot program or a clever proof of concept, are easy to highlight. But they can also become a comfort zone that slows progress. As AI adoption accelerates and tools become widely available, the real differentiator won’t be the models you buy, but the organizational muscle you build to deploy them at scale.

At the moment, many companies still treat AI as if it were a plug-in. They buy platforms, run pilots, and assume transformation will follow. Yet according to Prosci, while 65% of companies have launched AI pilots, only 11% have scaled them across the enterprise. What’s missing is an engine that turns experimentation into enterprise-level integration.

To truly transform, companies need to move beyond pilots and embrace an AI Operating Model (AIOM). This means re-engineering decision-making, workflows, and accountability so AI is embedded into the DNA of the organization rather than bolted on as an afterthought. Yes, there are risks, but the bigger risk is allowing competitors to build AI-driven capabilities while you’re still refining experiments.

The organizations that succeed won’t treat AI as just a set of tools — they’ll treat it as a capability: governed, measured, and scaled with the same discipline as finance, operations, or customer management. Everyone else will stay stuck running demos.

“In AI, the companies that succeed won’t be the ones with the most sophisticated models; they’ll be the ones who empower their people and build the muscle to use them effectively.”

What Is an AI Operating Model (AIOM)?

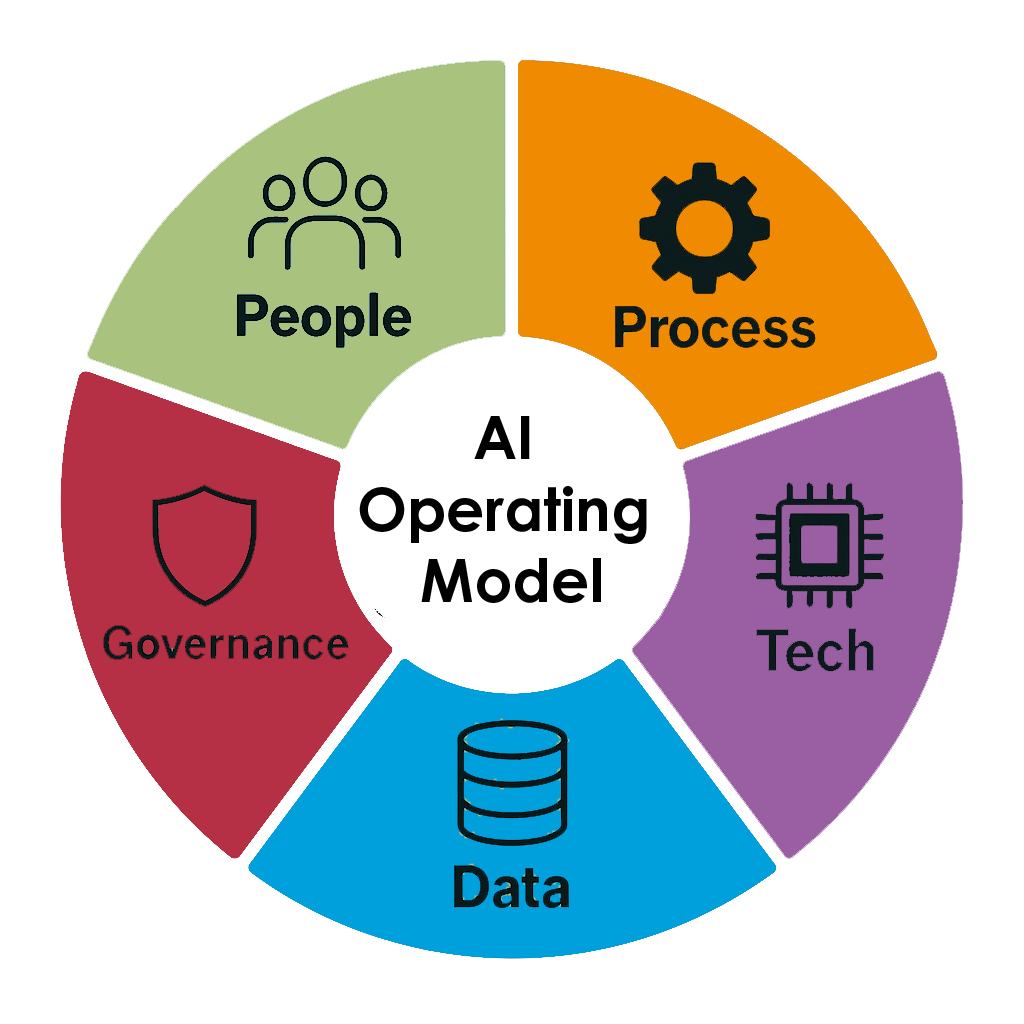

An AI Operating Model is more than a framework — it’s the nervous system of an AI-enabled enterprise. It connects people, processes, technology, data, and governance so AI can move seamlessly from ideas to execution to sustained impact.

Unlike traditional approaches that bolt AI onto existing structures, an AIOM acknowledges that AI requires fundamentally different ways of working. It creates a capability that must be embedded across decision-making, data flows, and workflows — not just another layer of technology.

Think of AI as a relay race. Business, data, governance, and technology all carry the baton. If one handoff falters, the race is lost. But with an effective AIOM, you’re not just running faster — you’re redesigning the track to create new paths your competitors can’t follow.

How AIOMs Differ From Other Operating Models

Traditional operating models were designed for predictable, manual processes. They assume standard inputs, consistent workflows, and decisions made by people. Digital operating models evolved to optimize digital channels and automation, but they still largely rely on sequential, process-driven logic.

AI operating models are different. They’re designed for adaptive learning, human–AI collaboration, and enterprise-wide flexibility. They recognize that AI systems learn and evolve, that data quality affects outcomes in real time, and that human oversight has to be embedded throughout the process, not just at the end.

The difference shows up in how roles are structured, how governance works, and how performance is measured. In traditional models, you optimize for efficiency and control. In AIOMs, you optimize for learning, adaptation, and business value.

Benefits of an AI Operating Model

Companies that get this right:

- Move faster in response to changes in tools or regulations

- Achieve consistent, scalable AI outcomes

- Align experimentation with business strategy, reducing duplication

- Create a culture where AI is owned across the business, not by a few specialists

- Turn individual successes into repeatable, compounding advantage

Without an AIOM, the opposite occurs: AI efforts run in silos, data quality becomes an afterthought, governance shows up too late, metrics stay undefined, and accountability is unclear. The result is expensive pilots that never scale, frustrated teams, and eroding confidence in AI’s potential.

With an AIOM, AI shifts from being a series of projects to becoming a repeatable enterprise advantage that compounds over time.

Signs You May Need an AI Operating Model

The warning signs often appear in subtle but telling ways:

- Disconnected AI projects: Multiple teams working on similar problems without coordination

- No clear ownership: Uncertainty about who is accountable for outcomes

- Governance gaps: Risk and compliance teams only involved at the end

- Vendor dependence: Heavy reliance on external providers instead of building internal capability

- Slow time-to-value: Nine to twelve months (or more) to see business impact

- Overhype and underdelivery: Internal credibility of AI slipping after repeated failures to scale

If no one in your organization can clearly state who owns an AI use case, where the data originates, or how success is measured, then your AI Operating Model is either missing or ineffective.
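The three questions above can be turned into a simple completeness check. The following is a minimal sketch, assuming a hypothetical use-case registry; the `UseCase` record, its field names, and the sample entry are illustrative and not part of any specific tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class UseCase:
    """Hypothetical registry entry for one AI use case."""
    name: str
    owner: Optional[str] = None           # accountable business owner
    data_source: Optional[str] = None     # where the data originates
    success_metric: Optional[str] = None  # how success is measured


def missing_answers(use_case: UseCase) -> List[str]:
    """Return the fields this use case cannot yet answer."""
    checks = {
        "owner": use_case.owner,
        "data_source": use_case.data_source,
        "success_metric": use_case.success_metric,
    }
    return [field for field, value in checks.items() if not value]


# Illustrative entry: an owner is named, but data origin and metric are not.
pilot = UseCase(name="invoice triage", owner="Finance Ops")
print(missing_answers(pilot))  # ['data_source', 'success_metric']
```

Running a check like this across every active use case gives a quick view of where ownership, data lineage, or measurement is still undefined.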

“If your AI Operating Model isn’t in place, even the smartest tool will stall.”

Core Components of an AI Operating Model

An effective AIOM works across five interlinked dimensions:

| Component | Focus Areas |
|---|---|
| People | Change leadership, AI fluency, workflow integration, role clarity |
| Processes | Governance, feedback loops, lifecycle from business case to ongoing operations |
| Technology | Tool alignment, enablement teams, scalable infrastructure |
| Data | Quality, lineage, discoverability, stewardship roles |
| Governance | Proactive, tiered, and enabling rather than merely risk-averse |

Technology and data often receive the funding, but without the right people and process support, change won’t stick. AIOMs balance all five areas.

Core Design Principles

Successful AI Operating Models are grounded in a set of design principles that influence every choice:

- Human–AI synergy: Design for collaboration, not replacement.

- Responsible by design: Build ethics and risk management in from the start.

- Scalable by default: Architect with growth in mind.

- Experimental at the core: Balance innovation with discipline.

- Transparency and trust: Ensure explainability, communication, and adoption.

Common AIOM Challenges

The hardest part of implementing an AIOM usually isn’t the technology — it’s the organizational side. Common pitfalls include:

- Tech-first, design-last: Investing in powerful tools without integrating them into workflows. Without change planning, capabilities stay disconnected. AI projects run solely by IT teams have a 50% lower success rate.

- Assuming AI will “do the work”: AI is an amplifier, not an autopilot. It enhances human judgment, but it doesn’t replace it.

- Leadership disengagement: Leaders who either overpromise AI’s short-term impact or underfund organizational change create unworkable conditions.

- Persistent silos: Teams work on similar problems independently, wasting resources and missing shared learning.

- Friction at handoffs: Business, data, and governance teams misalign on requirements or constraints, leading to delays and rework.

- Poor change management: Teams are introduced to new tools without clear training or integration, leading to resistance or misuse.

The reality: you can’t bolt AI onto broken processes and expect scale.

The rule: speed to value is critical. If your AI takes a year to show results, it’s already lagging behind.

Stakeholders in a Successful AIOM

A strong AI transformation depends on a well-orchestrated set of roles:

- Executive sponsors: Align AI to strategy and risk appetite. Without genuine sponsorship, initiatives lack the authority for cross-functional change.

- AI experts: Bridge technical and business needs, translating in both directions.

- Domain experts: Ensure AI fits real-world workflows and data constraints.

- Change champions: Drive adoption, manage resistance, and influence networks.

- Partners: Provide delivery support and accelerate capability-building.

AI literacy is not just for data scientists. Business leaders need fluency too — not in algorithms, but in AI’s capabilities, limitations, and integration requirements. When leaders understand AI well enough to ask the right questions, initiatives move faster and adoption is higher.

Centralized, Decentralized, or Federated Models

The structure of your AIOM should fit your maturity, culture, and priorities:

- Centralized models: Useful in early stages. They provide standards and expertise, but risk creating bottlenecks.

- Decentralized models: Best for mature companies with strong AI literacy. They allow rapid rollout and domain-specific optimization but risk inconsistency.

- Federated models: Blend centralized governance with local execution. They balance consistency and agility, making them a strong fit for large enterprises.

Many organizations begin with a centralized approach and evolve toward federated as maturity increases. The choice isn’t permanent — operating models evolve as skills, risks, and ambitions change.

AI Maturity Levels

Progression depends not just on technical skill but also on organizational readiness:

| Maturity Level | Description | Typical Patterns |
|---|---|---|
| Ad Hoc | Disconnected pilots, no coherent strategy | AI seen as side projects, often vendor-driven |
| Foundational | Centralized governance, basic structures in place | A central team owns tools, standards, and pilot intake |
| Integrated | Scaling across domains, federated teams | Business units take ownership of use cases, supported by central experts |
| AI-Native | AI embedded into all workflows and decision-making | AI seen as part of “how work gets done,” not as a separate capability |

Key Diagnostic Questions

- Who owns each AI use case?

- Is the data clean, governed, and accessible?

- What does success look like, and how is it measured?

- What percentage of pilots scale beyond proof-of-concept?

- How fast is time-to-value, from idea to impact?

- Is AI embedded in core workflows?

- Do innovation and operations connect seamlessly?

- Are governance and risk management engaged early enough to enable safe scaling?

These answers form the baseline for designing or evolving your AIOM. Companies that regularly revisit these diagnostics find blind spots faster and adjust before problems spread.
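Two of these diagnostics, the share of pilots that scale and time-to-value, are simple to quantify once pilots are tracked consistently. The sketch below assumes a hypothetical `Pilot` record with a start date, a scaled flag, and the date impact was first measured; the sample portfolio is illustrative data, not figures from any real organization.

```python
from datetime import date
from statistics import median
from typing import List, NamedTuple, Optional


class Pilot(NamedTuple):
    name: str
    started: date
    scaled: bool                  # moved beyond proof-of-concept?
    first_impact: Optional[date]  # date business impact was first measured


def scale_rate(pilots: List[Pilot]) -> float:
    """Percentage of pilots that scaled beyond proof-of-concept."""
    if not pilots:
        return 0.0
    return 100.0 * sum(p.scaled for p in pilots) / len(pilots)


def median_time_to_value_days(pilots: List[Pilot]) -> Optional[float]:
    """Median days from start to first measured impact (None if no impact yet)."""
    durations = [(p.first_impact - p.started).days
                 for p in pilots if p.first_impact is not None]
    return median(durations) if durations else None


# Illustrative portfolio only.
portfolio = [
    Pilot("A", date(2024, 1, 10), True, date(2024, 4, 9)),
    Pilot("B", date(2024, 2, 1), True, date(2024, 8, 29)),
    Pilot("C", date(2024, 3, 1), False, None),
    Pilot("D", date(2024, 5, 1), False, None),
]
print(scale_rate(portfolio))                 # 50.0
print(median_time_to_value_days(portfolio))  # 150.0
```

Tracking both numbers over time, rather than as a one-off snapshot, shows whether changes to the operating model are actually shortening the path from idea to impact.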

Are you ready for AI?

Afiniti’s 6Lever™ AI readiness assessment takes just 5 minutes and reveals the areas you need to focus on to maximize your chance of successful AI adoption.

Aligning on Strategic Direction

Define clear outcomes and priorities for AI from the outset. Without strategic alignment, AI initiatives become technology-driven experiments rather than business-driven capabilities.

Successful organizations identify how AI aligns to specific business themes: cost reduction, operational excellence, customer experience enhancement, innovation acceleration, or risk mitigation. This alignment provides a filter for prioritization and a framework for measuring success.

Governance and Risk

“Governance is not a barrier; it’s the enabler for safe and scalable experimentation.”

AI introduces risks that traditional frameworks don’t fully address, from hallucinations to regulatory volatility to cybersecurity gaps. The key is not to eliminate risk but to define your organization’s risk tolerance.

Different applications demand different safeguards. A customer-facing chatbot requires different controls than an internal automation tool. Governance should be tiered to match oversight with risk level. Low-risk use cases shouldn’t face the same approval process as high-risk ones.

Separate sandbox environments from scaled deployments. Give teams freedom to experiment, but establish clear triggers for when governance must engage. This supports innovation while protecting the enterprise.

Effective governance blends:

- Tiered review processes for low-, medium-, and high-risk applications

- Embedded risk checks at intake and design stages

- Clear data stewardship and ownership roles

- Transparent decision-making so business leaders know why a use case is approved, delayed, or rejected

When done right, governance doesn’t slow teams down. It accelerates them by removing uncertainty and clarifying the path to scale.
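As a rough illustration of tiered review, the sketch below routes a use case to a review path based on its risk tier. The classification rules, the two input flags, and the review step names are all assumptions chosen for the example; a real organization would define its own criteria in line with its risk tolerance.

```python
from enum import Enum
from typing import List


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical review steps per tier: higher tiers add oversight.
REVIEW_STEPS = {
    RiskTier.LOW: ["self-assessment checklist"],
    RiskTier.MEDIUM: ["self-assessment checklist", "data steward sign-off"],
    RiskTier.HIGH: ["self-assessment checklist", "data steward sign-off",
                    "risk and compliance review"],
}


def classify(customer_facing: bool, uses_sensitive_data: bool) -> RiskTier:
    """Assign a tier using illustrative rules (assumptions, not a standard)."""
    if customer_facing:
        return RiskTier.HIGH
    if uses_sensitive_data:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def required_reviews(customer_facing: bool, uses_sensitive_data: bool) -> List[str]:
    """Return the review steps a use case must pass before scaling."""
    return REVIEW_STEPS[classify(customer_facing, uses_sensitive_data)]


# A customer-facing chatbot gets the full review path; an internal
# automation tool without sensitive data gets only the checklist.
print(required_reviews(customer_facing=True, uses_sensitive_data=False))
print(required_reviews(customer_facing=False, uses_sensitive_data=False))
```

Making the rules explicit in this way also supports transparency: anyone can see why a use case landed in a given tier and what it must pass to proceed.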

Execution: Roadmap and Change Plan

Deploying and embedding an AIOM requires a roadmap that is people-centered and milestone-driven:

- A compelling AI vision story: Link AI capabilities to business outcomes in language that resonates across the organization. Communicate both opportunities and risks honestly, so teams see how their contributions matter.

- Tailored change interventions: Different groups face different challenges. A finance team worried about compliance won’t need the same training as a product team focused on innovation. One-size-fits-all change plans fail.

- Upskilling and continuous learning: AI fluency must evolve with technology. This requires ongoing training, peer networks, and hands-on experimentation — not one-off events.

- Feedback loops: Monitor adoption, measure performance, and adjust quickly. Feedback shouldn’t just come from executives but from the people actually using AI in workflows.

- Integration with performance management: Ensure AI adoption and results are built into KPIs, so teams see that using AI isn’t optional.

Success comes not from building the model, but from embedding it into daily work. The roadmap and change plan make that embedding systematic and sustainable.

Case Study: From Pilot Paralysis to Enterprise Scale

A major pharmaceutical company invested heavily in AI. They launched numerous R&D pilots and built a digital innovation board. Yet few initiatives moved beyond proof-of-concept.

The problems weren’t technical — they were organizational:

- No clear ownership. Multiple stakeholders were interested, but no one was accountable.

- Data responsibilities unclear. Quality and access issues emerged late in projects.

- Governance too late. Risk and compliance were engaged only at deployment, causing delays and rejections.

- Pilots left in limbo. Business units didn’t know when or how to take over ownership.

The Solution: Federated AIOM

The company co-created a federated AIOM to fix these gaps:

- Accountable business owners for each use case, responsible for adoption and results

- Data product stewards with defined responsibilities for quality, access, and governance

- AI enablement team to provide technical expertise and scaling support

- Process framework guiding initiatives from intake through feasibility, risk review, and embedding into operations, aligned to five strategic themes

- Integrated governance: Risk and compliance embedded early, preventing last-minute surprises

- Clear exit criteria for pilots, so no project stalled indefinitely

The Results

- Three AI use cases scaled within six months

- Stronger cross-functional trust, reflected in adoption metrics and better collaboration across business, data, and governance

- AI integrated into protocol design and embedded end-to-end in workflows

- A repeatable framework that allowed subsequent use cases to scale faster with fewer roadblocks

This company didn’t have a technology problem; they had an operating model problem. By redesigning organizational ownership, governance, and processes, they unlocked the full potential of their technical investments.

Trends in AIOMs

We’re seeing the emergence of layered AI Operating Models that address AI deployment as a multi-dimensional challenge:

- Business Value Layer: AI driven by business outcomes, with clear ownership and metrics

- Data Management Layer: Based on data mesh principles — domain ownership, quality standards, discoverability

- Governance Layer: Governance embedded throughout, not just at the end

Together, these layers create a lifecycle framework: innovation intake → technical feasibility → risk review → operational embedding. The payoff: reduced time from proof-of-concept to scale, sometimes by months, because all dimensions are addressed simultaneously.
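The lifecycle above can be modeled as a small sequence of stages with explicit exit criteria. This is a minimal sketch under stated assumptions: the `Stage` names mirror the four steps in the text, while the `advance` function and the single `exit_criteria_met` flag are hypothetical simplifications of what would be a richer review in practice.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = 1
    FEASIBILITY = 2
    RISK_REVIEW = 3
    EMBEDDING = 4


def advance(stage: Stage, exit_criteria_met: bool) -> Stage:
    """Move to the next lifecycle stage only when exit criteria are met."""
    if not exit_criteria_met or stage is Stage.EMBEDDING:
        return stage  # stay put: criteria unmet, or already at the final stage
    return Stage(stage.value + 1)


# Walk one initiative through the lifecycle.
stage = Stage.INTAKE
stage = advance(stage, exit_criteria_met=True)   # moves to FEASIBILITY
stage = advance(stage, exit_criteria_met=False)  # held at FEASIBILITY
print(stage.name)  # FEASIBILITY
```

Explicit gates like this keep pilots from sitting in limbo, since every initiative is always in a named stage with known criteria for moving on.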

This approach is already being built with some of the world’s most AI-ambitious companies. Those that master it will deploy AI faster, safer, and more effectively than competitors who still treat it as a technology issue.

AI Operating Model First Steps

If you’re ready to move beyond pilots and into enterprise-wide value, start by assessing your maturity honestly. Ask:

- Who owns your AI outcomes?

- How clean and accessible is your data?

- What does success look like, and how do you measure it?

- What percentage of pilots scale?

- How fast is time-to-value?

- Where are governance and risk engaged in the lifecycle?

The advantage of the next decade won’t come from better algorithms. It will come from stronger operating models. The companies that act on this will shape the future of their industries.

For support in designing and deploying your AI Operating Model — no matter your goals or maturity level — Afiniti can partner with you to accelerate adoption, reduce risk, and maximize value. Get in touch today.
